Then I started trying to figure out how to describe it and adapt it to general use, and I found myself adding more and more caveats, limitations and internal assumptions. It has grown organically to do what is needed, but what I have available isn't really designed for general use. Some of it I couldn't understand just from reading and observing (I'm a hands-on, break-it-to-understand-it kind of guy). Time to start taking it apart so I can put it back together. When I can do that and it starts up when I turn the key, then I can claim to understand it.

So I decided to go back to the fundamentals not of OpenShift, but of AWS EC2 itself.

Defining a Goal: Machines Ready To Eat

An OpenShift service consists of a number of component services. Ideally each component would have multiple instances for availability and scaling, but that's not required for initial setup. Only the OpenShift broker, console and nodes need to be exposed to the users.

The host configuration is complex enough that even for a small service it is best to use a Configuration Management System (CMS) to configure and manage the system, but the CMS can't start work until the hosts exist and have network communications. The CMS itself must be installed and configured. Once the hosts exist and are bound together then the CMS can do the rest of the work and a clean boundary of control and access is established. This will later allow the bottom layer (establishing hosts and installing/configuring the CMS) to be replaced without affecting the actual service installation above.

So the goal here is: create and connect hosts with a CMS installed using EC2. That's the base on which the OpenShift service will be built. If you run each of the component services on its own host using external DNS and authentication services, OpenShift requires a minimum of four hosts:

- OpenShift Broker

- Data Store (mongodb)

- Message Broker (activemq)

- OpenShift Node

Each of these can (theoretically, at least) be duplicated to provide high availability, but for now I'll start there. The goal of this series of posts is to create the hosts on which these services will be installed. We won't come back to OpenShift itself until that's done.

AWS EC2: Getting the lay of the land

If you're not familiar with AWS EC2, go check out https://aws.amazon.com . EC2 is the part of AWS which provides "virtual" hosts (for a fee, of course). There are free-to-try levels, but you are required to give a credit card to sign up and you're very likely to start incurring charges for storage even if you stick to the "free" tier. Read, be informed, decide for yourself.

AWS without the "W"

AWS presents a modern single-page web interface for all interactions, but I'm interested in command line or scripted interaction. Amazon does provide a REST protocol and has implemented libraries for a wide range of scripting languages. I'm using the rubygem-aws-sdk library (which is, surprisingly enough, written in Ruby) because I also want to use another Ruby tool called Thor.

Tasks and the Command Line Interface

Thor is a Ruby library which helps create really nice command line "tasks". The beauty of Thor is that you can use it both to define individual tasks and to compose those tasks into more complex task sequences. This allows you to test each step as a distinct CLI operation and also to debug only the step that fails when one inevitably does.

I'm going to use Thor and the aws-sdk to create a CLI interface to the AWS low level operations, and then compose them to create higher level tasks which, in the end, will leave me with a set of hosts ready to receive an OpenShift service.

I'm not going to try to create a comprehensive CLI interface to AWS. I'm only going to create the steps that I need to get this job done. A number of the steps will encapsulate operations which may seem trivial, but this will allow for better consistency and visibility of the operations. A primary goal is to have as little magic as possible. At the same time, I want to avoid overwhelming the user (me) with unnecessary detail when things are working as planned.

I'm not going to make you sit through the entire development process (which isn't complete). Instead I mean to show the tools that I've developed and use them to cleanly define the base on which an OpenShift service would sit.

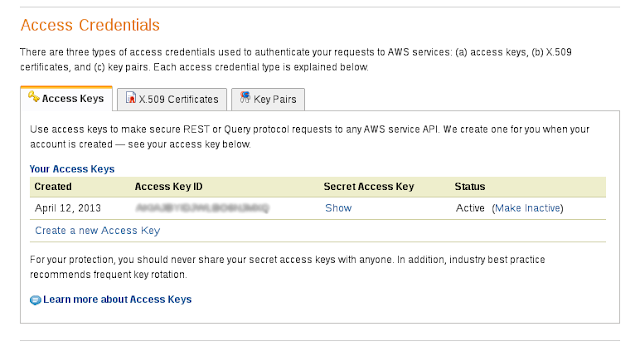

AWS Setup

To work with AWS, you must have an established account. To use the REST API, you need to have generated a set of access keys. To log into your EC2 instances, you need to have generated an SSH key pair, placed it where your SSH client can find it (usually in $HOME/.ssh), and configured your SSH client to use that key when logging into EC2 instances (in $HOME/.ssh/config).

- AWS Access Keys

- AWS SSH Key Pairs

- SSH client configuration
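For the SSH client configuration, the following is a small sketch that prints a stanza of the kind that could go into $HOME/.ssh/config. The host pattern, the user and the key file name are assumptions chosen for illustration; substitute the values that match your own key pair and instances.

```ruby
# Build an ssh_config stanza for EC2 logins. All values passed in are illustrative.
def ssh_config_stanza(host_pattern:, user:, identity_file:)
  <<~STANZA
    Host #{host_pattern}
        User #{user}
        IdentityFile #{identity_file}
        IdentitiesOnly yes
  STANZA
end

puts ssh_config_stanza(
  host_pattern: "*.amazonaws.com",                 # assumed pattern for EC2 public hostnames
  user: "ec2-user",                                # assumed default login user
  identity_file: "~/.ssh/YOURKEYPAIRNAMEHERE.pem"  # placeholder key file name
)
```

Host, User, IdentityFile and IdentitiesOnly are standard ssh_config keywords; everything on the right-hand side is a placeholder.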

Origin-Setup (really EC2 and SSH tools)

The tool set is currently called origin-setup and it resides in a repository on Github. The name is a misnomer: there's not actually any OpenShift in most of it.

- Github repo URL: https://github.com/markllama/origin-setup

Requirements

The tasks are written in Ruby using the Thor library. They also require several other rubygems. All of them are available on Fedora 18 as RPMs.

- ruby

- rubygems

- rubygem-thor

- rubygem-aws-sdk

- rubygem-parseconfig

- rubygem-net-ssh

- rubygem-net-scp

Getting (and setting) the Bits

Thor can be used to create stand-alone CLI commands, but I have not done that yet for these tasks. To use them you need to cd into the origin-setup directory and call thor directly. You will also need to set the RUBYLIB path to find a small helper library which manages the AWS authentication.

    git clone https://github.com/markllama/origin-setup
    cd origin-setup
    export RUBYLIB=`pwd`/lib
    thor list --all

AWS Again: configuring the toolset

The final step is to give the origin-setup toolset the information needed to communicate with the AWS REST interface. It reads this from the file ~/.awscred:

    AWSAccessKeyId=YOURKEYIDHERE
    AWSSecretKey=YOURSECRETKEYHERE
    AWSKeyPairName=YOURKEYPAIRNAMEHERE
    RemoteUser=ec2-user
    AWSEC2Type=t1.micro

This file contains what is essentially the passwords to your AWS account. You should set the permissions on this file so that only you can read it and protect the contents as you would your credit card.

RemoteUser is the default user for SSH logins: ec2-user on Fedora 18 and later, root on RHEL 6. The AWSEC2Type value sets the default instance type used when you create a new instance. The t1.micro type is small and is in the free tier; you will need to choose a larger type for real use.
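As a rough illustration of what reading such a file involves, here is a sketch using only the Ruby standard library. The real toolset lists rubygem-parseconfig as a requirement, so this is a stand-in and not the helper library's actual code; parse_awscred and private_file? are hypothetical names.

```ruby
# Parse KEY=VALUE lines into a Hash, skipping blank lines and # comments.
def parse_awscred(text)
  text.each_line.with_object({}) do |line, settings|
    line = line.strip
    next if line.empty? || line.start_with?("#")
    # Split on the first "=" only, since a value may itself contain "=".
    key, value = line.split("=", 2)
    settings[key.strip] = value.to_s.strip
  end
end

# True when group and others have no access at all (for example mode 0600).
def private_file?(path)
  (File.stat(path).mode & 0o077).zero?
end

# Placeholder contents in the same shape as the credentials file.
sample = <<~CRED
  AWSAccessKeyId=YOURKEYIDHERE
  AWSSecretKey=YOURSECRETKEYHERE
  AWSKeyPairName=YOURKEYPAIRNAMEHERE
  RemoteUser=ec2-user
  AWSEC2Type=t1.micro
CRED

settings = parse_awscred(sample)
puts settings["RemoteUser"]
puts settings["AWSEC2Type"]
```

Against a real file one would pass in File.read of the credentials path and could call private_file? on the same path first, warning if it returns false.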

Turn the Key

You should be able to use the thor command to explore the list of available tasks. Thor allows the creation of namespaces to contain related tasks. Most of the important tasks to begin with are in the ec2 namespace.

You can see the available tasks with the thor list command:

    thor list ec2 --all
    ec2
    ---
    thor ec2:image:create  # Create a new imag...
    thor ec2:image:delete  # Delete an existin...
    thor ec2:image:find TAGNAME  # find the id of im...
    thor ec2:image:info  # retrieve informat...
    thor ec2:image:list  # list the availabl...
    thor ec2:image:tag --tag=TAG  # set or retrieve i...
    thor ec2:instance:create --image=IMAGE --name=NAME  # create a new EC2 ...
    thor ec2:instance:delete  # delete an EC2 ins...
    thor ec2:instance:hostname  # print the hostnam...
    thor ec2:instance:info  # get information a...
    thor ec2:instance:ipaddress [IPADDR]  # set or get the ex...
    thor ec2:instance:list  # list the set of r...
    thor ec2:instance:private_hostname  # print the interna...
    thor ec2:instance:private_ipaddress  # print the interna...
    thor ec2:instance:rename --newname=NEWNAME  # rename an EC2 ins...
    thor ec2:instance:start  # start an existing...
    thor ec2:instance:status  # get status of an ...
    thor ec2:instance:stop  # stop a running EC...
    thor ec2:instance:tag --tag=TAG  # set or retrieve i...
    thor ec2:instance:wait  # wait until an ins...
    thor ec2:ip:associate IPADDR INSTANCE  # associate and Ela...
    thor ec2:ip:associate IPADDR INSTANCE  # associate and Ela...
    thor ec2:ip:create  # create a new elas...
    thor ec2:ip:delete IPADDR  # delete an elastic IP
    thor ec2:ip:list  # list the defined ...
    thor ec2:securitygroup:create NAME  # create a new secu...
    thor ec2:securitygroup:delete  # delete the securi...
    thor ec2:securitygroup:info  # retrieve and repo...
    thor ec2:securitygroup:list  # list the availabl...
    thor ec2:securitygroup:rule:add PROTOCOL PORTS [SOURCES]  # add a permission ...
    thor ec2:snapshot:delete SNAPSHOT  # delete the snapshot
    thor ec2:snapshot:list  # list the availabl...
    thor ec2:volume:delete VOLUME  # delete the volume
    thor ec2:volume:list  # list the availabl...

It's time to see if you can talk to EC2. This first query requests a list of images produced by the Fedora hosted team:

    thor ec2:image list --name \*Fedora\* --owner 125523088429
    ami-2509664c Fedora-x86_64-17-1-sda
    ami-4b0b6422 Fedora-i386-17-1-sda
    ami-6f640c06 Fedora-i386-18-20130521-sda
    ami-b71078de Fedora-x86_64-18-20130521-sda
    ami-d13758b8 Fedora-18-ec2-20130105-x86_64-sda
    ami-dd3758b4 Fedora-18-ec2-20130105-i386-sda
    ami-ed375884 Fedora-17-ec2-20120515-i386-sda
    ami-fd375894 Fedora-17-ec2-20120515-x86_64-sda

If instead you get a really long messy ruby error, then check the permissions and contents of your ~/.awscred file.
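Once the listing works, its plain "ID NAME" output is easy to post-process. As a sketch, and assuming you wanted the most recent 64-bit Fedora 18 image, the lines from the Fedora listing could be filtered like this. The selection rule (match x86_64 and -18-, then take the latest eight-digit date stamp in the name) is my own assumption about the naming scheme, not something the tools do for you.

```ruby
# Listing output from the Fedora image query: one "IMAGE_ID NAME" pair per line.
listing = <<~LIST
  ami-2509664c Fedora-x86_64-17-1-sda
  ami-4b0b6422 Fedora-i386-17-1-sda
  ami-6f640c06 Fedora-i386-18-20130521-sda
  ami-b71078de Fedora-x86_64-18-20130521-sda
  ami-d13758b8 Fedora-18-ec2-20130105-x86_64-sda
  ami-dd3758b4 Fedora-18-ec2-20130105-i386-sda
  ami-ed375884 Fedora-17-ec2-20120515-i386-sda
  ami-fd375894 Fedora-17-ec2-20120515-x86_64-sda
LIST

# Turn the lines into a Hash of image id => image name.
images = listing.lines.map(&:split).to_h

# Keep the 64-bit Fedora 18 images.
candidates = images.select { |_id, name| name.include?("x86_64") && name.include?("-18-") }

# Pick the one whose name carries the latest YYYYMMDD stamp.
image_id, image_name = candidates.max_by { |_id, name| name[/\d{8}/] }
puts "#{image_id} #{image_name}"
```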

Before experimenting too much here, it's probably a good idea to get familiar with EC2 and Route53 by using the web console a bit.

Next post I'll establish the DNS zone in Route53 and show how to manage DNS records to prepare for my OpenShift service.

References

- AWS EC2 Console - managing remote virtual machines

- AWS Route53 (DNS) Console - managing DNS

- rubygem-aws-sdk - an implementation of the AWS REST protocol in Ruby

- SSH publickey - secure login without passwords

- Thor - A ruby gem to build command line interface "tasks"

- Puppet - A popular Configuration Management System

- Git - a popular Source Code Management system

- Github - a site for keeping Git repositories

- origin-setup - a set of Thor tasks for managing AWS EC2 and Route53, with a goal of automating the creation of an OpenShift Origin service in EC2

Will OpenShift work on t1.micro instance(s)? I will use it just for tests and to get some knowledge, not for a real production environment. I'm asking because I've tried to run OpenShift on one VM on my laptop and 503 errors appeared (I'm almost sure that it was because of response times).

Not on a micro. Just starting up the mysql daemon will eat up a micro. I think the smallest test instance is an m1.medium.

And is a micro also not suitable if I run Broker, Mongo, etc. on separate machines? I'd like to run OpenShift on the free tier, but I'm not sure if it's worth trying.

Is there a way to create node instances automatically right before the code and the stack are deployed?

Basically I do not want to have all nodes pre-provisioned before apps can be deployed.

I don't know the answer to that question. When I was writing these posts the answer was "no". At that time our OpenShift ops team would provision new nodes as they determined the load needed it, but that was not an automatic operation. I haven't worked on OpenShift Hosted in many months now, so I can't say if they have created and published an auto-provisioning system for nodes in AWS.

dev@lists.openshift.redhat.com and #openshift-dev on freenode IRC

.awscred : AWSSecretKey has been changed to AWSSecretAccessKey